From Clipper Chip to Smartphones: Unlocking the Encryption Debate

34:05 minutes

In 1993, the National Security Agency introduced the Clipper Chip, an encryption device that would protect personal communication and provide a backdoor for the government to gain entry to that information. But the Clipper Chip was never widely adopted, and by 1996 it had been shelved.

During those three years, Science Friday checked in with security experts and privacy advocates to discuss the debate about the role of government in setting encryption standards and how to balance privacy and security. Two of those guests—security expert Dorothy Denning and privacy advocate Marc Rotenberg—rejoin the program to discuss how far the encryption debate has come from the dawn of the digital age and into the era of Apple.

Dorothy Denning is a Distinguished Professor in the Department of Defense Analysis at the Naval Postgraduate School in Monterey, California.

Marc Rotenberg is President of the Electronic Privacy Information Center in Washington, D.C.

IRA FLATOW: This is Science Friday. I’m Ira Flatow. The encryption battle between Apple and the FBI seems to take a new twist every week. Last Monday the FBI said they reportedly might not need Apple’s help after all in breaking the iPhone. The feds reportedly found a third party to create a digital back door into the phone.

But this has not been the first case about getting into locked items. Back in the 1990s, there was a different crypto war raging. The FBI, technologists, and privacy experts were fighting over the Clipper chip. You remember the Clipper chip? It was introduced in 1993 by the NSA.

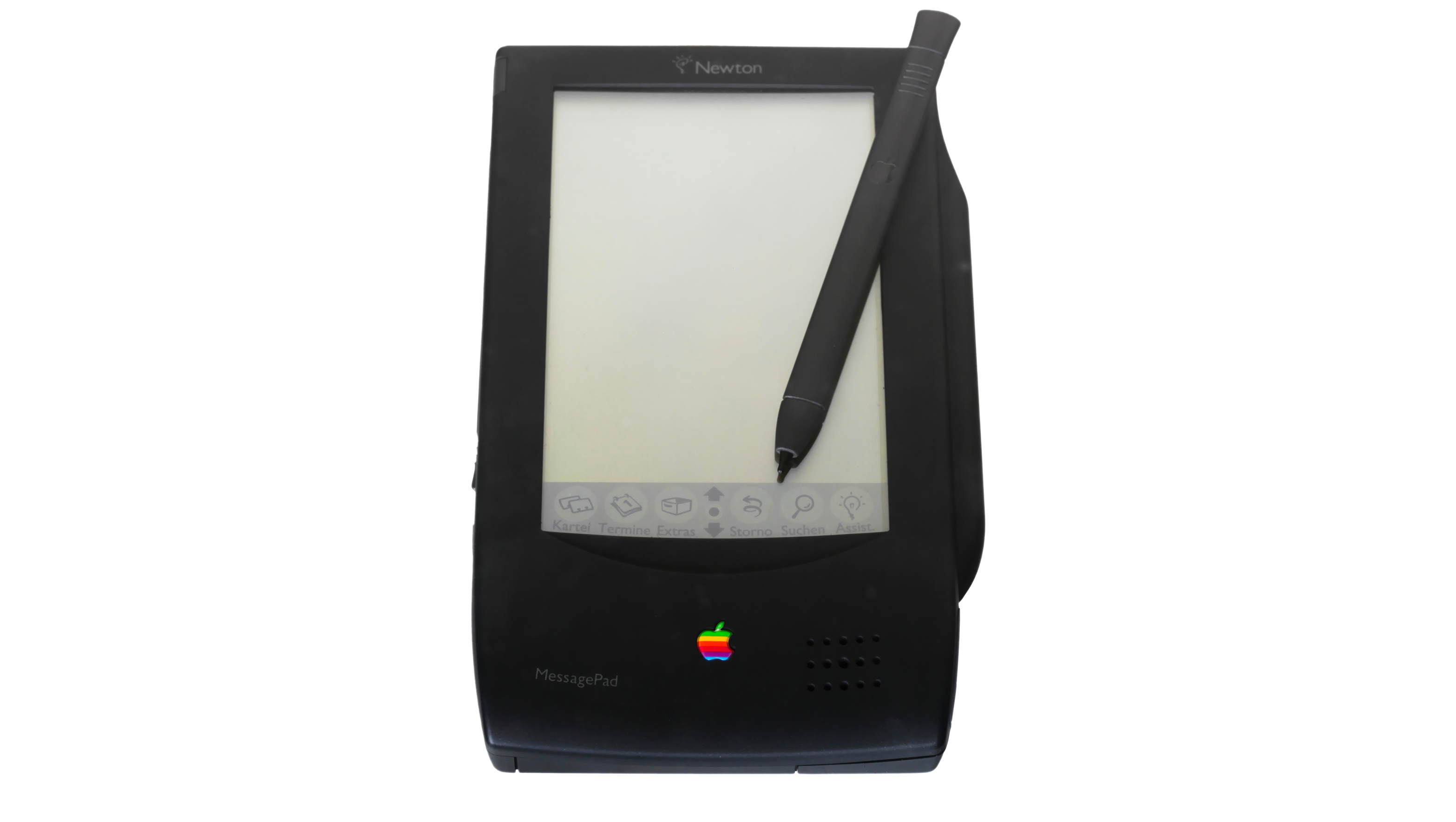

The device could encrypt the information on your fax or your telephone, or the Apple Newton PDA, which came out about the same year, and it also would provide a back door for the government to get into the device, remember? That sound familiar now? Long story short, that Clipper chip was never implemented and it died a quiet death.

While Crypto War 1.0 was playing out, our next guests joined us in 1993 and ’94 to weigh in on the debate, and they’re back today over 20 years later to crack open the latest Apple case. In the ’90s Dorothy Denning was the professor and chair of computer science at Georgetown University. These days she’s a distinguished professor of defense analysis at the Naval Postgraduate School in Monterey, California. Welcome to Science Friday, welcome back.

DOROTHY DENNING: Thank you.

IRA FLATOW: Marc Rotenberg was the director at the Computer Professionals for Social Responsibility. He was just beginning his current position as president of the Electronic Privacy Information Center in DC. Welcome back to you, too.

MARC ROTENBERG: Hi, Ira.

IRA FLATOW: Marc, you’ve been involved in this debate since the early days of the crypto battles. You joined us on that show in 1993 to talk about technology and privacy, and here’s what you said.

MARC ROTENBERG: I certainly think that companies should pursue good security policy. But I think the government has to be very careful in its role in this area. There are other interests, as we know, that the government might have in the design of network systems. And we get into a dangerous ground, really, when the government starts enforcing standards or compelling certain activities with private companies.

IRA FLATOW: That sounds like the conversation we could have today, Marc, isn’t it?

MARC ROTENBERG: Could have been last week.

IRA FLATOW: It’s what happens over 23 years. Are you surprised how far we’ve come, or we haven’t come?

MARC ROTENBERG: Well some of it feels very, very familiar, and in other ways it’s very different. When we started this more than 20 years ago, I think there were only a small number of people who really understood the importance of encryption for privacy, for security, for the growth of the internet. And it was a group of technologists, computer security experts, people who had helped design key encryption protocols. And it was also a group of civil liberties lawyers who were concerned about the possibility that the government would build in this backdoor architecture of surveillance.

So when we started it was about 40 people, I think, who wrote to President Clinton back in 1994 and said, please don’t go forward with this. We think it will be a mistake. And of course now it’s almost a very similar debate around Apple and the FBI.

IRA FLATOW: And why was the Clipper chip, then, not implemented? Was it pressure from the public?

MARC ROTENBERG: Well I think it was a whole range of reasons. I mean our letter, which started with 40 people, became, in fact, the first internet petition. We put it online and people wrote to us and said wow, I don’t quite understand what Clipper is or what encryption is, but this sounds really important for the future of the internet. And over the span of six weeks we got 50,000 people to sign up, which in those days was actually a lot of people. It was practically half the internet. I mean nowadays that’s like a Facebook group or something, but back then it was a broad showing of public support.

Industry groups got involved. Some of the technologists looked closely at the protocols. I recall Matt Blaze, for example, looking at a protocol that the FBI had put together to try to implement Clipper, and he basically hacked it, and said this isn’t going to work, and I can break it. And other people, as well, weighed in. So it was a very interesting debate. The public, the technologists, the business groups, leaders in Congress.

There was even an international dimension because we had others outside of the US expressing concern about this type of network surveillance.

IRA FLATOW: Mhm. Dorothy, you wrote your book Cryptography and Data Security in 1982, and it was one of the first books about algorithms and data security. What’s your take on how the encryption debate has evolved?

DOROTHY DENNING: Well, you know, actually I had kind of thought the debate was over. That we had decided as a country that it was important to go forward with secure systems, and that we would not be mandating back doors or anything else in our technology to allow access. So I was actually kind of surprised that the debate came back.

IRA FLATOW: Wasn’t the debate different, though, back then if I recall? I mean the debate back then was security on two ends of a pipe. You wanted to send some papers about your industry or your corporate papers secretly to someone else who couldn’t tap in, and it may have been corporate secrets, things like that. We weren’t talking about terrorism back then, were we?

DOROTHY DENNING: Not so much, but the Clipper chip was actually, they did actually implement it, and they put it in some phones. And it was basically a technology for phones that went into an AT&T secure phone device. Because at the time, AT&T was planning on putting in a chip that would not allow government access and the government talked them into putting one in that would provide access to the keys. And so they made the chip, they produced some phones, but nobody bought them. Except for the Justice Department, because the Justice Department I guess agreed to buy some when they talked AT&T into making the switch. But nobody else wanted it, which I think is why it died. And for, you know, lots of reasons.

IRA FLATOW: Number 844-724-8255. You can also tweet us @scifri, talking about the history of digital encryption. Marc, you joined us in 1993 to discuss the FBI’s wiretap proposal at the time, and here’s a little clip from that discussion.

MARC ROTENBERG: The FBI should expect the assistance of the telephone companies when they are in possession of a lawful warrant. The debate during the past year in Congress about the FBI’s proposal I think goes to a much deeper question, which is whether the FBI can expect the telephone companies to design the network equipment so as to facilitate wire surveillance in the future.

IRA FLATOW: What happened to that proposal?

MARC ROTENBERG: This is turning into like the golden oldies, Ira.

IRA FLATOW: That’s exactly right.

MARC ROTENBERG: I’m trying to think what else we talked about in the 1990s. But it really was the key issue, and it is the issue that’s playing out now almost exactly in the Apple FBI dispute. I think simply stated Apple would say listen, I mean we have complied with that lawful warrant, we have turned over the information that’s relevant to the investigation that we have in our possession. But what you’re asking us to do now is to cross a line and to design a new technique to enable access, not only to this phone, but presumably to any other iPhone you might be interested in.

And that was almost the exact same debate we were having back in the early 1990s over the telephone network. The FBI said we’re running into some trouble, some of the new technology is making it difficult for us to execute a lawful warrant, and we want the telephone companies to ensure that when we have that lawful warrant, we can get what we’re going for. And you know, I think Congress made a mistake in 1994. They largely accepted that argument and they passed an act called the Digital Telephony Act. But they also drew a line, and they said well let’s do this for the telephone network and hold off on the internet because it’s really not clear what’s going to happen there.

Apple was actually able to point to that in their legal response to the FBI when they said look, Congress back in the 1990s considered whether internet companies should have to do this and basically said no. So even a bit of that history played out over the last few weeks in the dispute over the iPhone.

IRA FLATOW: Oh so they did go back that far and say this has already been talked about.

MARC ROTENBERG: That was actually, that was a key part of the Apple argument, because the government had cited a law from 1789 called the All Writs Act, which is a way for the government to try to compel conduct by private actors. And Apple said wait just a moment, before we’re talking about that 18th century law, we really should be talking about the more relevant law from the 1990s when Congress actually looked at this issue.

IRA FLATOW: And in fact, I want to play for you, because we are going back to golden oldies, Dorothy, how you described the encryption debate taking hold in the public.

DOROTHY DENNING: Encryption has generated a big yawn from society, and I think that’s gradually changing. Most of the drive for it, I think, is going to come from industry who is concerned about theft of intellectual property.

IRA FLATOW: And Dorothy has that played out?

DOROTHY DENNING: Well you know I think that the individuals are probably at least as interested as industry in encryption. So it’s something that a lot more people are interested in now than before. I don’t think it’s a yawn anymore. As Marc pointed out earlier, there’s so many more people online now, on the internet. It took off with electronic commerce and so many other things that were not so much of an issue back then.

We didn’t have people buying things on the internet at places like Amazon, and other places, and trading on eBay and so on. None of that was happening back in 1993 when the Clipper chip was proposed.

IRA FLATOW: Yeah. And in fact in the ’90s cryptography was classified as a munition, and it was illegal to export it. Marc, this is what you said.

MARC ROTENBERG: But there’s a problem with this export policy. One is that foreign companies can develop these products. The second is that many of the products are currently available. So we have a situation where, for example, the export of a popular cryptographic method called DES, or D-E-S, is restricted by the United States government so it cannot be obtained by foreign countries, but it can be purchased on the streets in Moscow.

IRA FLATOW: Marc how much different is– it doesn’t sound very much different than what you might argue today.

MARC ROTENBERG: Right, in fact in the current debate a famous cryptographer Bruce Schneier did a survey of the international availability of cryptographic products. I think he found over 800 different types of products available outside of the US. And the point that Bruce was making, and that we had made previously, is that even when you try to impose these kinds of restrictions domestically, it doesn’t actually solve the problem of foreign availability.

I think that’s also one of the big concerns that people on the security side have. We could actually make US internet users more vulnerable by weakening the encryption that’s available to them while users outside of the United States would actually have better protection if they weren’t facing those same kinds of restrictions.

IRA FLATOW: Dorothy we asked you back in 1994 your thoughts about the government’s stance on the Clipper chip, and this is what you said.

DOROTHY DENNING: Were the government to take its resources and put out there an extremely good standard that thwarted law enforcement, then of course criminals and everybody else would use that and gleefully be able to conduct their communications with complete impunity. The government thought that was irresponsible, and I fully agree. I mean I think that knowing what we know now about the impact of these technologies on law enforcement, given that technology was at hand, to do it in a way that would allow us to have that law enforcement access without jeopardizing security and privacy.

IRA FLATOW: And what do you think about that today, Dorothy?

DOROTHY DENNING: Well I think we need all the help we can to make our systems secure. I’d like to see the government more focused on helping achieve that rather than wanting to break it.

IRA FLATOW: We’re talking about encryption on Science Friday from PRI, Public Radio International. I’m with Marc Rotenberg and with Dorothy Denning, and we are talking about going back into the way back machine, back in 1994, 1993. What do you think the biggest– has there been an evolution in the thought, Marc, since 20 years ago in the value of encryption?

MARC ROTENBERG: Well I think Dorothy actually made this point very well just a couple of minutes ago when she was talking about the dramatic expansion of the internet as a platform for commerce. That was just around the corner in the early 1990s. The first web browser was about to be released, and some of the first internet based companies were about to launch their services. But that hadn’t happened yet. And so we were still thinking mostly in terms of communications. We were thinking in terms of a digital complement to traditional analog telephone service.

As the economy, the country, the internet ecosystem moved toward a much more commerce driven model, encryption actually became more important. Because of course now people were putting credit card numbers online and there was a risk of hacking, there was a risk of financial fraud. And then the other big development over the past decade has been the emergence of cloud based services. So now you have a lot of personal data being stored on remote servers, which is oftentimes the target for criminal hackers as well. And there again encryption becomes very important to ensure security and to protect financial assets.

So I think what’s happened over time is that people become aware that encryption is probably even more important than we thought it would be 20 years ago.

IRA FLATOW: Dorothy is the Clipper chip debate the same fight that’s happening between Apple and the FBI?

DOROTHY DENNING: I don’t think it’s exactly the same fight. But I mean back then the Clipper chip was not being put forward as a mandate, and so the government wasn’t saying that everybody had to use the Clipper chip, it was a voluntary thing. I think the big question on the table right now is the extent to which industry has to be able to provide access to things. Is that a mandatory requirement or not.

IRA FLATOW: People don’t realize that, the voluntary part of that. Because you could voluntarily include it in your fax machine or your phone.

DOROTHY DENNING: You could. Well you know, frankly, I’d just as soon that somebody be able to provide access. So that if I lose whatever information, my password or whatever I need in order to get access, that I haven’t irrevocably lost access to all my data. But I can appreciate that other people don’t care about that.

IRA FLATOW: All right, we’re going to take a short break and take some phone calls. A lot of people online want to move this forward and talk a little bit about the Apple FBI case, so we’re going to do that a little bit. Our number 844-724-8255. You can also tweet us @scifri S-C-I-F-R-I. We’ll take a break and then come back and talk about lots more with Marc Rotenberg and Dorothy Denning. Stay with this, we’ll be right back.

This is Science Friday, I’m Ira Flatow. We’re talking this hour about encryption. We’re talking about the history of encryption in the digital age, starting with the Clipper chip back in the ’90s, and now we’re going to move forward a little bit about the Apple and FBI case with my guests Dorothy Denning, she’s distinguished professor in the Department of Defense Analysis at the Naval Postgraduate School, that’s in Monterey, California. Marc Rotenberg is president of the Electronic Privacy Information Center in Washington DC.

Let’s talk a little bit. We have a lot of calls, let me go to the phone calls because there are a lot of good ones here that people would like to talk about. So let’s just go right to them. Let’s go to Stockton, California to Joshua. Hi, welcome to Science Friday.

JOSHUA: Hi, thanks. So my question is if the FBI does win this case, and Apple does have to create some sort of software that lets the FBI get into every iPhone, what would stop Apple just on the next update, or with their next iPhone, creating better software that would negate this new application?

IRA FLATOW: OK. Dorothy, Marc, any comments?

DOROTHY DENNING: Yeah, I don’t think it would actually affect what they did in the future, I think, at least what the FBI is asking for right now, just would apply to that one phone.

IRA FLATOW: All right let’s go to another call, Kevin in Portland, Oregon. Hi Kevin.

KEVIN: Hi, thanks for taking my call. And thanks for covering this issue, as well. So I just saw an interview this morning with [INAUDIBLE] this morning, and he’s saying that basically the whole thing between Apple and the FBI is kind of a theater of the absurd, because the hackers that work for the NSA would have no problem hacking into any of these phones. They kind of laughed at the idea that they need a back door.

So I’m just wondering– basically the issue is being used by the FBI kind of as a precedent to be able to get into any phone when they want to. And so I was just wondering if either of your guests would have any comments on that.

IRA FLATOW: Marc, you have a comment on that?

MARC ROTENBERG: Well certainly some have speculated that the FBI did take this case following the tragedy in San Bernardino to try to establish a favorable legal precedent. Basically they wanted to have a court order a US company to design a way to hack the phone. I think ultimately they decided that was probably not a good battle to pursue, and so they appear to have backed off.

But as to the caller’s question, whether the NSA also has the ability to hack the phone, I think, is a very interesting question. I’ve been thinking about that myself. I mean it is, of course, the top code cracking agency in the world. They have extraordinary resources, extraordinary skills. Personally I would be surprised if they couldn’t break the phone, but that’s not yet been established.

IRA FLATOW: If they could break the phone, is the information they would find on it still relevant? I mean, you know, the people on there, the contacts, whatever, wouldn’t they all be gone? And changed, and moved?

MARC ROTENBERG: Not necessarily. There’s an interesting back story in this investigation, which is that this iPhone, like many iPhones, is routinely backed up to the Apple server, to the cloud. And in fact when the FBI came to Apple, they were able to pull off the backup of the phone up to I think about a month and a half before they provided the order to get direct access to the phone.

So basically the Bureau found itself in a situation where they couldn’t get whatever was on the phone over the last six weeks, because it hadn’t been backed up. And Apple said, well we actually don’t have the ability to get to that either. So by trying to get to that at that moment in time, they were saying well you need to redesign your phone so that you can get to it if we’ll need it. And Apple’s response was, well that actually puts all of our customers at risk.

IRA FLATOW: What if a court orders that Apple create software to break the phone? And that would employ hundreds of software engineers at Apple. And all those software engineers said, you know, we stand behind Tim Cook, we’re not going to do this for you.

MARC ROTENBERG: Yeah, well that’s a really interesting ethical issue, a really interesting professional responsibility issue. Because of course if you’ve devoted your life to developing techniques that help safeguard personal information and protect people from malicious hacking, and from identity theft, and financial fraud. And all of these crimes are very real, by the way, one of the top crimes in the United States right now is actually a stolen cell phone. And people steal cell phones because there’s so much valuable personal information.

So you devote your career to trying to solve that problem, and along comes your government and says well now, actually, we want you to undo what you did, because we think it’s really important for you to get access, or for the government to get access to the contents of the phone. And I think at least for some people that could easily raise some ethical concerns.

IRA FLATOW: Wouldn’t the FBI like to find a way out of going to court on this? I mean the day before they were to appear in court, suddenly someone could crack the phone?

MARC ROTENBERG: Well that’s right, and as I tried to explain on your program 20 years ago, I don’t think our position– I’m not sure if I got it right now, but I’ll try again– I don’t think our position is necessarily anti-FBI or anti law enforcement. And I know sometimes people hear folks talk about privacy and they think well, they’re against law enforcement. But actually that’s not our view. I mean I think where there’s a lawful warrant, it’s absolutely important to comply with the warrant.

But I think there is a line there. And it’s very interesting, of course, when companies are in effect kind of pulled over that line, or the government tries to pull a company over the line and says, now we’re asking you to do something to create some information, to design some technique you wouldn’t normally do. And by the way, there may also be some risks to some people who are completely unrelated to the investigation. I think at that moment, a company has to pull back.

And this is also why I think what Tim Cook did here was so notable. I think he recognized that moment and said that’s really a line we can’t cross. And that was not so different, actually, from what we were talking about 20 years ago.

IRA FLATOW: Dorothy Denning, would you agree?

DOROTHY DENNING: Yeah, I would agree with Marc. But there’s also another issue at play here, because apparently some company came forward within the last few days saying that they thought they could– that they had technology that could break into the phone. And my understanding is that then the FBI basically backed down against Apple and is pursuing that.

So another question now is going to come up, is that if they’re able to succeed and find some vulnerability that they’re able to exploit, is the FBI then obliged to tell Apple about that?

MARC ROTENBERG: Yeah that’s a really interesting issue. That’s come up over the last couple of years with the National Security Agency, and many people in the computer security field have accused the NSA of exploiting what are called zero day exploits. Basically flaws that are out there that haven’t been publicized, and then leaving those flaws in place. Meaning, for example, that if there’s a problem in an operating system, the NSA, knowing there’s a problem in the operating system, doesn’t tell the company by the way, you need to fix this.

So one of the recommendations from the president’s expert group, he pulled together a bunch of very knowledgeable people a couple years ago, and they said there really should be an obligation on federal agencies when they uncover those flaws to make sure the companies fix them. So I think here, in an odd way, almost the best outcome would be if the FBI is able to get lawful access to the evidence it’s seeking without requiring Apple to change the device, but then actually coming back to Apple and saying this is how we did it, and now you need to fix the device.

IRA FLATOW: Let’s play this out a little bit. The FBI will give an update on their progress on April 5, I understand. Take us through both sides of this scenario? If they do find a third party, what does that mean for Apple? It means that they’re off the hook for now?

MARC ROTENBERG: Well If the FBI is able to get access to the information without going back to court, I’m sure that’s their preferred option at this point. If the FBI finds that it can’t get access to the information, it certainly has the option of going back to court and trying to compel Apple to make the engineering change that the Bureau had sought originally.

Now of course they also run a risk. Because they could go into court, have that legal dispute, and end up losing it. So that would be a set back as a matter of legal precedent.

But what I said a moment ago, which I think is a very interesting twist in the current matter, is that if the FBI is able, with the help of a third party, to get access to the information, no need to go to court. But there’s still another step, which is that they actually should go back to Apple and fix whatever flaw was identified by the third party so that iPhone customers will not, in the future, run the risk that someone else, not acting with a lawful warrant, will gain access to the information on their iPhone.

IRA FLATOW: Is the FBI, when it goes to court, does it have to say to the judge, we have exhausted every possibility?

MARC ROTENBERG: Well they did actually for that March 22 hearing that was postponed. I mean that was a big part of the argument. They were saying, in fact, that they had tried everything and they had just run out of options. And that was part of the reason that they had asked the court to compel Apple to make the changes to assist in the investigation.

Now subsequently, they apparently have found some other means they think may work. But they do have the option to go back to court. And that, of course, could be very interesting if they decide to do that.

IRA FLATOW: Could they ask the NSA for help?

MARC ROTENBERG: Well, I think they could. It’s a very funny thing about the NSA. Personally I don’t think the NSA should have a role in domestic surveillance. I think the members of Congress who pursued the Church hearings in the 1970s, and eventually passed the Foreign Intelligence Surveillance Act, would really be horrified to imagine that the NSA today is playing the role that I suspect it is playing in domestic surveillance.

But certainly the one thing that the NSA could do is to provide the technical assistance to the domestic law enforcement agency, which is the FBI, to gain access to the evidence that the FBI has properly in its possession. That kind of technical assistance role is in a presidential executive order. In a way I think it’s the least intrusive type of activity that the NSA could engage in.

IRA FLATOW: Dorothy Denning, do you agree?

DOROTHY DENNING: Yeah, that seems reasonable.

IRA FLATOW: And so let me go to the phones. Let’s go to Denver, Colorado, let’s go to A.C. there. Hi, welcome to Science Friday.

Are you there in Denver?

PHYLLIS: Did you mean Phyllis?

IRA FLATOW: I’m sorry, my screen area has gone a little wacky, so maybe, yes Phyllis.

PHYLLIS: Hi there. I’m a low-tech news junkie. And my question, I guess, you might say, is why haven’t we heard the discussion about why Apple doesn’t hack their system themselves? And then just give the data to the FBI. I mean have they already had that discussion? And then they could control it?

IRA FLATOW: Yeah OK, let’s ask that. Why give the phone over physically, why not have Apple hack it itself, keep the data to itself, and then give the data over to the FBI?

MARC ROTENBERG: That scenario actually has been talked about quite a bit, and partly it seems almost intuitive that somehow Apple’s able to do that. But as Apple has explained in a lot of detail, and I think a lot of other technologists have corroborated, it is such a contrary activity for a company that’s focused on security.

It’s kind of like asking an auto manufacturer to design a car that will do maximum damage upon collision. And your entire company is based around the premise that you’re trying to build cars that are as safe as possible. And if you have to go back and imagine to yourself, how do you make the car more vulnerable in a collision, you’re going down a road that could easily create risk to others. And I think that’s a big part of the reason Apple is very reluctant to go down that road.

You know, the security is so strong here. Sometimes people miss this. It’s not just that the FBI can’t get access to the phone, the phone is actually designed so that Apple can’t get access to the phone. And they did that purposefully. They did that because I think they concluded that if even they had the ability to get access to the phone, it would leave their customers at risk from malicious hackers who could do some real damage.

IRA FLATOW: I’m Ira Flatow, this is Science Friday from PRI, Public Radio International. Talking about, when we’ve moved on to the Apple, if you’ve just joined us, the Apple FBI case. Let me go take another call from the phones, here. Let’s go to James in Port Republic, Maryland. Hi James.

JAMES: Hi, good afternoon.

IRA FLATOW: Hi there.

JAMES: Thanks for taking my call. So my question is, what kind of jurisdiction would the FBI have for other electronic products such as cell phones that are made in other countries that are sold in the US, such as Samsung phones–

IRA FLATOW: Oh, he dropped out of his car phone, but that’s a good question. What could happen there? Can you force a foreign company?

MARC ROTENBERG: That’s an interesting question, that’s starting to sound like a law exam question, maybe. I mean, I imagine a US law enforcement agency would have somewhat less influence over a foreign corporation, but of course a foreign corporation is present in the US, so they’re accountable to the US and the US legal system.

I think what the question also raises, which we haven’t talked about yet but is probably a key part of the discussion, is this: if Apple does agree to go along with the FBI, what happens when a foreign law enforcement agency, you know whether it’s in China, or in France, or somewhere else, says to Apple well a whole bunch of our customers in our country are using your phones, and now we need to get access in the course of a criminal investigation, and we need you to do for us what you did for the FBI? I don’t see a principled basis in that situation for someone like Tim Cook to say, well we could do it for the FBI, but we can’t do it for you.

IRA FLATOW: Is Tim Cook waiting to be ordered by a judge to do this, and then go along with it? Or could he be held in contempt of court if he refuses?

MARC ROTENBERG: Well I do think what he’s done up to this point has really been a bit courageous. There are a lot of ways that companies could deal with a situation like this that wouldn’t require the CEO getting out in front, and being on national TV, and trying to explain to their customers why they were doing something that really wasn’t about profitability. But it was simply a social position the CEO feels strongly about.

I think from his perspective, that for the FBI to conclude that it doesn’t need Apple to design its phone to make it less secure is probably the best outcome. But I also suspect it probably won’t settle the matter. I think maybe 20 years from now we’ll be doing this radio program again.

IRA FLATOW: I certainly hope we will be, and hope we’ll all be around here. Dorothy, any last thoughts on this before we say goodbye?

DOROTHY DENNING: Well just a plea. There are so many security problems right now. I mean there’s so much crime taking place on the internet, and so on. So a plea for better security on all our products. I’m especially concerned when I read about people hacking cars, and hacking other vehicles, and hacking into health care systems, and holding data and hospitals hostage, and everything else. So we really need to focus more on security.

IRA FLATOW: Yeah, well we will. And those are great topics for us to take up in the next 25 years. This has been part of our 25th anniversary celebration, bringing back golden oldies, as you say, Marc, from the past. And we’re doing that very happily all year this year.

Thank you, both of you, for taking time to be with us today.

MARC ROTENBERG: Nice speaking with you.

IRA FLATOW: Marc Rotenberg is the president of the Electronic Privacy Information Center in Washington. Dorothy Denning, distinguished professor, Department of Defense Analysis at the Naval Postgraduate School in Monterey, California.

Copyright © 2016 Science Friday Initiative. All rights reserved. Science Friday transcripts are produced on a tight deadline by 3Play Media. Fidelity to the original aired/published audio or video file might vary, and text might be updated or amended in the future. For the authoritative record of ScienceFriday’s programming, please visit the original aired/published recording. For terms of use and more information, visit our policies pages at http://www.sciencefriday.com/about/policies.

Alexa Lim was a senior producer for Science Friday. Her favorite stories involve space, sound, and strange animal discoveries.